Explore how AI optimizes the software development lifecycle and enhances it with AI-driven development.

Most teams searching for AI SaaS development services aren’t actually shopping for AI. They’re shopping for the confidence that the agency they pick has shipped enough real AI products to know which features earn their engineering cost and which are just chatbots dressed up in a dashboard. The phrase “AI SaaS” has quietly stopped meaning anything specific. A todo list with a summarize button calls itself AI SaaS now. So does a multi-agent platform doing $50K worth of inference per month per customer. Same words, completely different builds, completely different agencies needed.

Here’s a buyer-side framework for evaluating AI SaaS development services in 2026: how the work actually breaks down, the model choice you’re inheriting whether you realize it or not, what realistic budgets and timelines look like, and the red flags that should send you to the next pitch.

AI SaaS development services span the full work of building a software product where artificial intelligence is part of the product’s core value, not a chatbot widget bolted on the side. That distinction matters more than most pricing pages admit.

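There are two builds people call “AI SaaS,” and they cost wildly different amounts: an AI-native product, where removing the AI means the product doesn’t exist, and a working SaaS product that adds AI features to improve an existing experience.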

Both are legitimate, but they need different architectures, different model strategies, and different agencies. If an agency proposes the same build process for both, that’s the first signal to slow down.

A real AI SaaS build covers seven layers: product discovery and AI opportunity mapping, system architecture, backend infrastructure, the model and data pipeline, frontend and dashboards, billing and usage tracking, plus deployment with monitoring. Most service pages will list the first three and skip the rest. The skipped ones are where projects bleed money.

When we scope an AI SaaS at PixlerLab, the work breaks down roughly like this:

| Phase | What happens | Time share |
|---|---|---|
| Discovery and AI mapping | Define the actual problem, identify where AI earns its complexity, map data sources | 10 to 15% |
| Architecture and model strategy | Pick the model, design the data pipeline, decide on multi-tenancy and security | 10 to 15% |
| Backend, APIs, infrastructure | Build the engine, set up AWS or Google Cloud, design for scale | 25 to 35% |
| Model layer and integration | Prompt engineering, tool use design, RAG setup, eval loops | 15 to 20% |
| Frontend and dashboards | The interfaces users actually touch | 15 to 20% |
| Billing, usage tracking, auth | Stripe, role-based access, subscription logic | 5 to 10% |
| Testing, deployment, monitoring | QA, observability, model drift checks, ongoing optimization | 10 to 15% |
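To make the time shares concrete, here is a minimal sketch that turns them into week ranges for a build of a given length. The 20-week total is an assumption chosen for illustration, the function names are hypothetical, and real phases overlap rather than running back to back, so treat the output as a sanity check on a proposal, not a schedule.

```python
# Phase shares copied from the table, as (low %, high %) of total build time.
PHASE_SHARES = {
    "Discovery and AI mapping": (10, 15),
    "Architecture and model strategy": (10, 15),
    "Backend, APIs, infrastructure": (25, 35),
    "Model layer and integration": (15, 20),
    "Frontend and dashboards": (15, 20),
    "Billing, usage tracking, auth": (5, 10),
    "Testing, deployment, monitoring": (10, 15),
}


def phase_week_ranges(total_weeks: float) -> dict[str, tuple[float, float]]:
    """Return the (low, high) number of weeks each phase implies for a build."""
    return {
        phase: (total_weeks * low / 100, total_weeks * high / 100)
        for phase, (low, high) in PHASE_SHARES.items()
    }


def shares_are_consistent() -> bool:
    """The ranges only describe a real plan if 100% falls between the summed lows and highs."""
    low_total = sum(low for low, _ in PHASE_SHARES.values())
    high_total = sum(high for _, high in PHASE_SHARES.values())
    return low_total <= 100 <= high_total


if __name__ == "__main__":
    # 20 weeks is an illustrative assumption, not a quoted timeline.
    for phase, (low, high) in phase_week_ranges(20).items():
        print(f"{phase}: {low:g} to {high:g} weeks")
```

If an agency's proposal gives the model layer or the testing and monitoring phase far less time than these ranges imply, that is worth asking about.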

The phase most agencies skip in their proposals is the eval loop. That’s the system you use to measure whether the AI is doing what it should over time. Without it, you ship the product, the model behavior drifts, the customer complains, and nobody can tell whether it’s a bad prompt, a bad input, or a bad model version. An AI SaaS without an eval setup is a SaaS that will quietly degrade and nobody will know why. This is what most teams get wrong on their first build.
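An eval loop does not need to be elaborate to be useful. The sketch below shows the smallest version of the idea: a fixed set of test cases, a score, and a comparison against a stored baseline. Everything in it is an assumption for illustration: `fake_classifier` stands in for a real model call, the exact-match scoring is the simplest possible rubric, and the 0.90 baseline and 0.05 tolerance are made-up values.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    """One fixed input paired with the label the AI feature should return."""
    prompt: str
    expected: str


def run_eval(model_fn: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Run every case through the model and return the fraction answered correctly."""
    if not cases:
        raise ValueError("eval set is empty")
    passed = sum(
        1
        for case in cases
        if model_fn(case.prompt).strip().lower() == case.expected.lower()
    )
    return passed / len(cases)


def has_regressed(score: float, baseline: float, tolerance: float = 0.05) -> bool:
    """Flag a run whose score fell more than `tolerance` below the stored baseline."""
    return score < baseline - tolerance


def fake_classifier(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return "invoice" if "amount due" in prompt.lower() else "other"


CASES = [
    EvalCase("Amount due: $400 by March 1", "invoice"),
    EvalCase("Meeting notes from Tuesday", "other"),
    EvalCase("Please remit the amount due", "invoice"),
    EvalCase("Invoice #221 attached", "invoice"),  # the stub misclassifies this one
]

if __name__ == "__main__":
    score = run_eval(fake_classifier, CASES)
    print(f"accuracy={score:.2f} regressed={has_regressed(score, baseline=0.90)}")
```

Run on a schedule and on every prompt or model version change, a check like this is what lets you tell a bad prompt from a bad input from a bad model version.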

If you hire an LLM-agnostic agency, you’re hiring an agency that hasn’t picked a side. That sounds neutral and balanced. In practice, it usually means they have surface-level experience with several model providers and deep experience with none. The model layer of an AI SaaS is not a swappable component, no matter what the marketing slide says. The way you write prompts, design tool use, set up retrieval, manage context, and structure your eval rubric is all model-specific in production.

At PixlerLab we build Claude-first, and we’ll tell you exactly why before you ask. Claude is unusually strong at multi-step instruction following, which matters when an AI feature has to read a document, classify it, pull data, and call the right tool reliably across thousands of users. Its long context window means agents can process whole documents or conversation histories in one pass, without the chunking workarounds that introduce errors. And its safety characteristics make it easier to deploy in regulated or enterprise contexts where unexpected outputs are not acceptable.

This is not neutral advice. It’s a position. If your use case favors a different model, like long-context cost optimization with Gemini or specific multimodal tasks with GPT-4o, the honest answer is that we’d say so, and we sometimes suggest founders shop elsewhere. The point isn’t that Claude wins every comparison. The point is that an agency without an opinion on this is an agency that hasn’t done enough production work to form one.

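Budgets across the industry land in three rough tiers: roughly $25K to $60K for an MVP, $70K to $200K for a production build, and $200K to $500K+ for enterprise-grade work. These numbers match what we’ve seen across our work and what credible cost guides published in 2026 are reporting.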

Three numbers that surprise founders almost every time:

If you’re at the validation stage and not sure your idea is worth a $150K commitment, the saner first move is to build a Claude-powered MVP first, prove the AI feature pulls its weight, and then commit to the full platform.

Five things that should make you pause when reading an agency’s proposal:

A good agency will use specific words: prompt caching, function calling, tool use, RAG with pgvector or Pinecone, multi-tenant Postgres with row-level security, Stripe for billing, Anthropic Claude for the model layer, AWS or Google Cloud for infrastructure. Specificity is a proxy for production experience.
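Two of those terms, RAG and multi-tenant isolation, interact in a way worth seeing once. The sketch below is an in-memory stand-in, not how a production system would be written: in the stack named above, the tenant filter would be enforced by Postgres row-level security and the ranking by a pgvector query. The `Chunk` type, the `retrieve` function, and the two-dimensional embeddings are all hypothetical and exist only to show the ordering: filter by tenant first, then rank by similarity.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """A stored piece of customer content with its owner and a toy embedding."""
    tenant_id: str
    text: str
    embedding: tuple[float, ...]


def cosine_similarity(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(
    chunks: list[Chunk],
    tenant_id: str,
    query_embedding: tuple[float, ...],
    top_k: int = 2,
) -> list[Chunk]:
    """Drop other tenants' chunks first, then return the top_k most similar."""
    visible = [c for c in chunks if c.tenant_id == tenant_id]
    ranked = sorted(
        visible,
        key=lambda c: cosine_similarity(c.embedding, query_embedding),
        reverse=True,
    )
    return ranked[:top_k]


# Hand-written toy data; real embeddings come from an embedding model.
CHUNKS = [
    Chunk("acme", "Refund policy: 30 days", (1.0, 0.0)),
    Chunk("acme", "Office hours: 9 to 5", (0.0, 1.0)),
    Chunk("globex", "Refund policy: 14 days", (1.0, 0.0)),
]

if __name__ == "__main__":
    for chunk in retrieve(CHUNKS, "acme", (0.9, 0.1), top_k=1):
        print(chunk.text)
```

The point of the ordering is that another customer's document can never reach the prompt, even when it is the closest match to the query.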

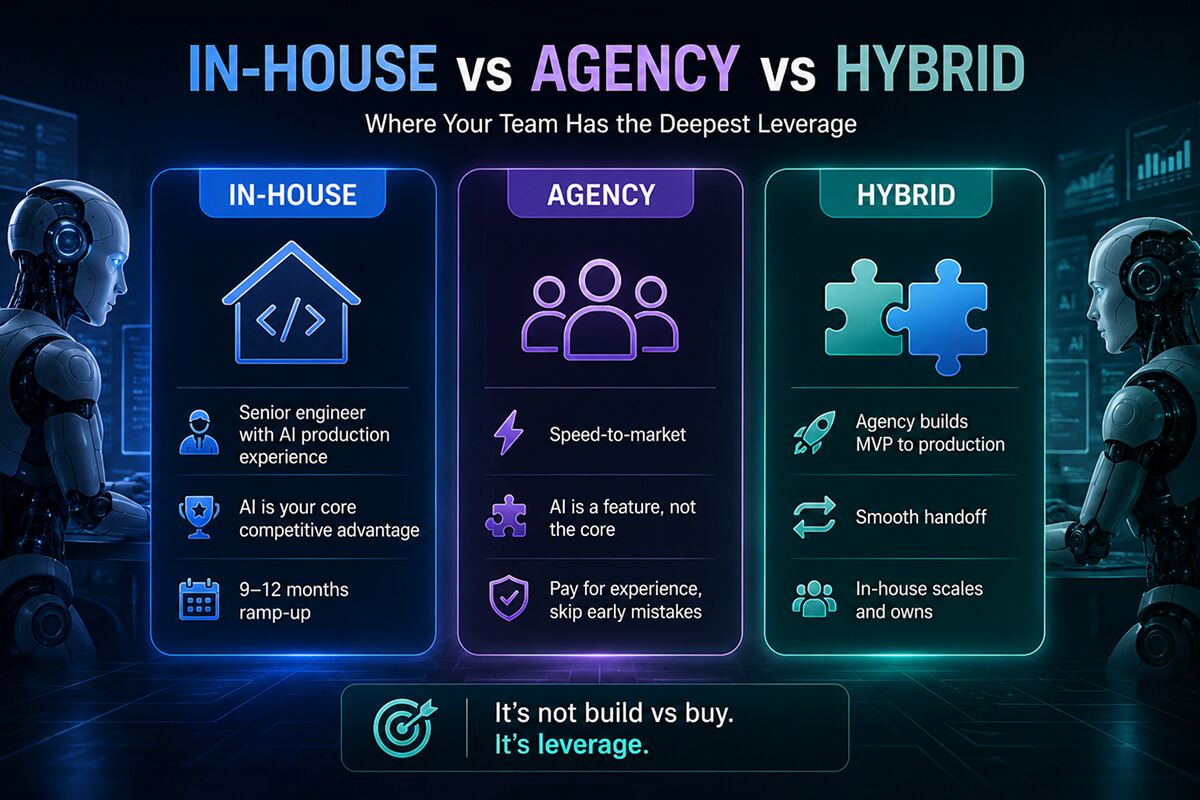

The build-vs-buy debate for AI SaaS gets framed wrong almost every time. The real question isn’t whether you “should” go in-house or hire. It’s about where your team has the deepest leverage. A short way to think about it:

Most of the founders we work with at PixlerLab start with hybrid in mind. They want a senior team that has shipped 15 or 20 AI SaaS platforms before, and a path to take ownership later. That structure usually saves both money and time compared to either pure model.

Two cases where the right answer is not a new AI SaaS:

If you’re a profitable B2B product trying to “add AI,” what you usually need is not a rebuild. You need a targeted feature integration where Claude becomes a function inside your existing product: document summaries, intelligent search, draft generation. That work scopes more like a 4-to-8-week project, not a 4-month build.

If the real need is “our team spends 20 hours a week on this internal process,” what you want is AI workflow automation inside your existing tools, not a new SaaS product to sell. Different scope, different budget, faster ROI.

The honest test is this: would you sell this to customers, or do you just want to use it internally? The answer changes the entire engagement type.

We’ve shipped 20+ AI SaaS platforms with the same playbook. It looks like this:

If you’d rather skip the trial and error on this, here’s how we approach AI SaaS development. And for proof that we’ve shipped consumer-grade SaaS at speed before, our build of the Aboutmii landing page builder is a good reference for the velocity and design quality we aim for.

A focused production AI SaaS takes 3 to 5 months end to end with an experienced team. An MVP can launch in 6 to 10 weeks. Enterprise builds with compliance and complex integrations stretch to 6 to 12 months. The single biggest factor that extends timelines is scope creep during the build, not the AI work itself.

Realistic ranges are $25K to $60K for an MVP, $70K to $200K for a production build, and $200K to $500K+ for enterprise-grade work. Inference costs in production are a separate line item and can run $500 to $5,000+ per month at moderate scale. Agencies that quote a single number without showing the phase breakdown are not giving you a real estimate.
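Because inference is a separate line item, it helps to estimate it before the build rather than after the first invoice. Here is a rough sketch of the arithmetic. The per-token prices, request volume, and token counts are placeholder assumptions, not any provider's actual rates, so substitute current pricing from your model provider before relying on the result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPricing:
    """Price in dollars per million tokens. Values used below are placeholders."""
    input_per_mtok: float
    output_per_mtok: float


def monthly_inference_cost(
    requests_per_month: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    pricing: ModelPricing,
) -> float:
    """Estimate monthly spend as tokens consumed times price per million tokens."""
    input_mtok = requests_per_month * input_tokens_per_request / 1_000_000
    output_mtok = requests_per_month * output_tokens_per_request / 1_000_000
    return input_mtok * pricing.input_per_mtok + output_mtok * pricing.output_per_mtok


if __name__ == "__main__":
    # Hypothetical rates and traffic, chosen only to illustrate the calculation.
    pricing = ModelPricing(input_per_mtok=3.0, output_per_mtok=15.0)
    cost = monthly_inference_cost(
        requests_per_month=200_000,
        input_tokens_per_request=2_000,
        output_tokens_per_request=500,
        pricing=pricing,
    )
    print(f"Estimated inference cost: ${cost:,.0f} per month")
```

Under these assumed inputs the estimate comes to $2,700 per month. The estimate scales linearly with request volume and prompt size, which is why retrieval design and prompt length show up directly on the bill.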

AI SaaS is built around AI as the core value. Remove the AI and the product doesn’t exist. SaaS with AI features is a working product that adds AI to improve an existing experience. Different architecture, different engineering effort, different agency fit. Most “AI SaaS” cost guides blur the two, which is why their numbers feel inconsistent.

It depends on the use case, but the choice locks in real architectural decisions, so it’s not as swappable as people pretend. Claude is strong on long-context reasoning, tool use, and safety, which makes it well suited for document-heavy or enterprise workflows. GPT-4 has broader ecosystem tooling. Open-source models like Llama work when you need on-premise control. An agency with no opinion on this isn’t experienced enough.

Yes, and often the smarter move. Adding Claude as a feature layer to an existing SaaS, for document summaries, intelligent search, automated drafts, or AI agents inside the product, typically takes 4 to 8 weeks and avoids the cost of a full rebuild. Scope it the same way you’d scope any feature: what problem does it solve, what’s the success metric, what’s the failure mode.

Skipping the eval setup. Teams ship a working AI feature, the model behavior drifts over weeks or months, customers notice before the team does, and now nobody can debug it because there’s no measurement layer. A real AI SaaS has a system for evaluating output quality over time. If the agency proposal doesn’t mention this, that’s the single biggest red flag in the document.

The teams shipping good AI SaaS in 2026 aren’t the ones with the biggest budgets. They’re the ones who picked a partner with enough production experience to disagree with them when the scope was wrong, and who scoped the first build around the AI feature that actually earns its complexity. Everything else is theater.

If you’re at the point where the question is no longer whether to build an AI SaaS but how to scope the first version of one, talk to our team. We’ll either tell you what we’d build, or tell you honestly why a smaller MVP or a feature integration would serve you better first.
