Explore AI-powered software development trends, skills, and their impact on modern tech careers.

Imagine cutting your project timelines drastically while keeping quality high. That’s the promise of AI-powered software development services. At PixlerLab, we’ve watched AI revolutionize our clients’ frameworks, automating repetitive tasks and delivering deep insights through analytics. So why is integrating AI such a big deal for today’s businesses? To stay ahead in a competitive market, you need constant innovation and efficiency. AI development, from machine learning to natural language processing, is no longer just nice to have; it’s essential for any forward-thinking strategy.

Our AI solutions range from creating custom AI models to embedding AI tools into existing systems. This allows companies to automate workflows, make smarter choices, and significantly boost their operational intelligence. It’s not merely about the tech; it’s about reshaping business processes to be more agile and informed. At PixlerLab, we tailor these AI-powered solutions to specific business needs, which can fundamentally change how a business operates.

Traditional software development can feel like a drag because it relies heavily on manual processes and static codebases. These methods often lead to inefficiencies, higher costs, and longer time to bring products to market. Ever been stuck with tedious manual updates on a legacy system? Yep, that’s just one of the many hurdles. And with the explosion of data in the digital age, businesses need quicker, more precise ways to process information.

AI tackles these challenges head-on by automating work in ways that cut down on human error and speed up workflows. AI models can sift through massive datasets to find patterns and insights that would take humans far longer to uncover. Integrating AI into business processes boosts decision-making, fuels innovation, and gives companies a competitive edge. The move to AI isn’t about replacing human creativity. It’s about enhancing it, making processes smarter and more robust.

But how do you design AI-powered solutions with a smart system architecture? The core idea is creating an infrastructure that’s both flexible and scalable, ready to adapt to changing business needs and tech advances. It must integrate AI tools smoothly with existing workflows, ensuring that new systems enhance rather than disrupt current operations.

Going for a modular design really helps with flexibility. By breaking down applications into microservices, each module can be deployed and scaled independently, making maintenance and upgrades much easier. This approach allows system parts to evolve without affecting the entire application, which makes it well suited to AI integration. Plus, technologies like Docker can support a modular architecture by packaging applications into lightweight, portable containers.

APIs and middleware are essential players in connecting AI components with existing systems. They act as go-betweens, allowing different software applications to communicate and keeping data flowing smoothly between them. Through these integration strategies, businesses can adopt AI capabilities without needing to completely overhaul existing systems. API gateways can further streamline this approach by managing and monitoring multiple APIs in one place.
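As a rough illustration of the go-between role described above, the sketch below wraps a model behind a small request handler that validates input and returns a status code with a JSON body. Everything in it is an assumption made for illustration: `KeywordSentimentModel`, `handle_request`, and the `text`/`label` field names are hypothetical and not part of any PixlerLab system or real framework.

```python
import json


class KeywordSentimentModel:
    """Hypothetical stand-in for a real model; any object with predict(text) works."""

    def predict(self, text):
        return "positive" if "great" in text.lower() else "neutral"


def handle_request(model, raw_body):
    """Validate a JSON request, call the model, and return (status, JSON body)."""
    try:
        payload = json.loads(raw_body)
    except json.JSONDecodeError:
        return 400, json.dumps({"error": "body must be valid JSON"})

    # Only accept an object with a non-empty string under "text".
    text = payload.get("text") if isinstance(payload, dict) else None
    if not isinstance(text, str) or not text.strip():
        return 422, json.dumps({"error": "'text' must be a non-empty string"})

    return 200, json.dumps({"label": model.predict(text)})


status, body = handle_request(KeywordSentimentModel(), '{"text": "Great service"}')
```

Because the existing system only sees the handler's request and response shapes, the model behind it can be swapped or retrained without changing the callers.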

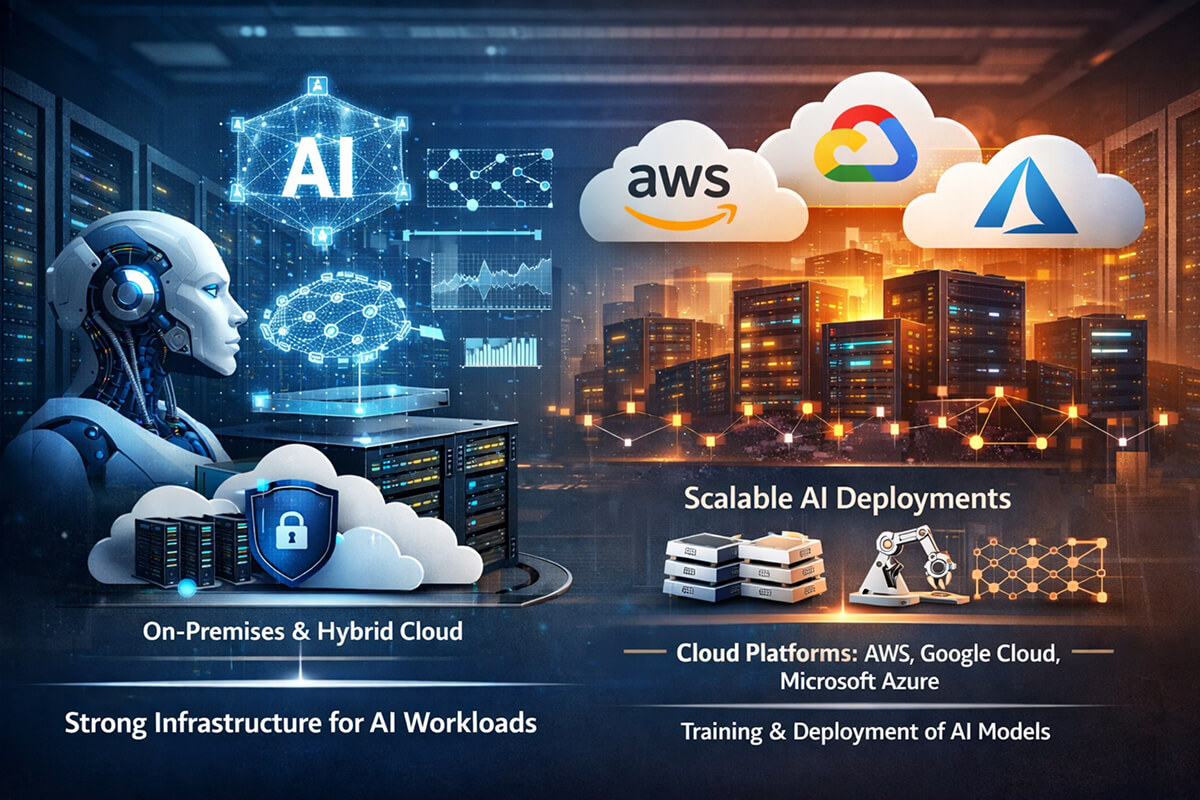

Strong infrastructure is key for supporting AI workloads. Cloud platforms like AWS, Google Cloud, or Microsoft Azure make scaling operations a breeze. They provide the computational resources needed for training and deploying AI models. We’ve used these platforms at PixlerLab to scale AI deployments effectively for our clients, ensuring performance remains consistent even when demand fluctuates. On-premises solutions with hybrid cloud strategies can also be explored for handling sensitive data.

Implementing AI solutions calls for careful planning and execution. The process involves several steps, each critical to ensuring successful AI technology deployment.

It’s crucial to be wary of common pitfalls in tool selection, such as picking an AI framework that lacks support or doesn’t fit with your current tech stack. Compatibility is key, and a mismatch can lead to unnecessary complications down the road.

Now, let’s turn to a simple example of integrating an AI model using TensorFlow. Suppose we’re creating a model to classify images. This snippet sets up a Convolutional Neural Network (CNN), a popular deep learning architecture for image classification tasks.

    import tensorflow as tf
    from tensorflow.keras import layers, models

    # Define a simple CNN model for 64x64 RGB images
    model = models.Sequential([
        layers.Conv2D(32, (3, 3), activation='relu', input_shape=(64, 64, 3)),
        layers.MaxPooling2D((2, 2)),
        layers.Conv2D(64, (3, 3), activation='relu'),
        layers.MaxPooling2D((2, 2)),
        layers.Flatten(),
        layers.Dense(64, activation='relu'),
        layers.Dense(10, activation='softmax')  # one probability per class
    ])

    # Compile the model; sparse_categorical_crossentropy expects integer class labels
    model.compile(optimizer='adam',
                  loss='sparse_categorical_crossentropy',
                  metrics=['accuracy'])

    # Summary of the model
    model.summary()

    # Example data preparation
    # Note: CIFAR-10 images are 32x32x3, so either resize them to 64x64
    # (for example with tf.image.resize) or set input_shape=(32, 32, 3) above.
    # (train_images, train_labels), (test_images, test_labels) = tf.keras.datasets.cifar10.load_data()
    # train_images, test_images = train_images / 255.0, test_images / 255.0  # scale pixels to [0, 1]

    # Training the model
    # model.fit(train_images, train_labels, epochs=10, validation_data=(test_images, test_labels))

This code snippet shows a basic CNN using TensorFlow. We import the necessary modules and define a sequential model consisting of two convolutional layers, each followed by pooling, then a flattening layer and two dense layers. The output layer uses a softmax activation function for classification into ten categories. The model is compiled with the Adam optimizer, known for its efficiency and low memory requirements. This setup serves as a foundational model for image classification tasks. The second convolutional layer adds depth to the model, allowing it to learn more intricate features.
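To make the layer stack more concrete, here is a small pure-Python sketch that reproduces the arithmetic behind `model.summary()` for this architecture. It assumes the Keras defaults used in the snippet (unpadded 3x3 convolutions with stride 1, and 2x2 pooling that rounds down); the helper names are made up for this example.

```python
def conv2d_shape(h, w, kernel=3):
    """Output height and width of an unpadded, stride-1 convolution."""
    return h - kernel + 1, w - kernel + 1


def conv2d_params(in_channels, out_channels, kernel=3):
    """Weights plus one bias per output channel."""
    return kernel * kernel * in_channels * out_channels + out_channels


def dense_params(n_in, n_out):
    """Weights plus one bias per output unit."""
    return n_in * n_out + n_out


def cnn_summary(height=64, width=64, channels=3):
    """Return (flattened feature count, total trainable parameters)."""
    h, w = conv2d_shape(height, width)   # Conv2D(32)
    params = conv2d_params(channels, 32)
    h, w = h // 2, w // 2                # MaxPooling2D((2, 2))
    h, w = conv2d_shape(h, w)            # Conv2D(64)
    params += conv2d_params(32, 64)
    h, w = h // 2, w // 2                # MaxPooling2D((2, 2))
    flat = h * w * 64                    # Flatten
    params += dense_params(flat, 64)     # Dense(64)
    params += dense_params(64, 10)       # Dense(10)
    return flat, params


flat_features, total_params = cnn_summary()
```

For a 64x64x3 input this gives 12,544 flattened features and 822,922 parameters, almost all of them in the first dense layer, which is one reason input resolution has such a large effect on model size.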

Choosing the right tech stack is crucial for the successful implementation and scalability of AI solutions. At PixlerLab, our go-to stack includes TensorFlow and PyTorch because of their versatility and solid community support.

TensorFlow excels in projects demanding flexibility and scalability, especially when deploying AI models in production environments. On the other hand, PyTorch is preferred for research-oriented endeavors due to its simplicity and dynamic computation graph. These tools offer extensive libraries and resources, making them ideal for a wide range of AI applications.

Beyond AI frameworks, databases like MongoDB and PostgreSQL handle data storage efficiently. Between them, they cover both structured and unstructured data, a common requirement in AI projects. Cloud services, such as AWS or Google Cloud, deliver the computational resources needed for AI workloads. These technologies make AI solutions not only easy to deploy but also reliable and scalable. Also, Kubernetes provides orchestration for deploying, managing, and scaling containerized applications, a vital feature for maintaining complex AI infrastructures.

Performance is a major consideration when deploying AI-powered software solutions. To truly benefit from AI, solutions must run efficiently with minimal latency. Our approach at PixlerLab focuses on optimizing both the AI models and the underlying infrastructure.

We use benchmarking tools to measure AI model performance, assessing latency, throughput, and resource utilization. These metrics provide insights into how well models function under different conditions and workloads. For instance, benchmarking tools like Apache JMeter or custom scripts can simulate load and measure response time, offering a clearer picture of performance bottlenecks.
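The "custom scripts" option can be as small as the sketch below, which times repeated calls to a prediction function and reports mean latency, 95th-percentile latency, and throughput. The `benchmark` helper and the `fake_model` stand-in are hypothetical; in practice the callable would wrap a real model or an HTTP request to a deployed endpoint.

```python
import math
import statistics
import time


def benchmark(predict, inputs, warmup=5):
    """Time predict() over inputs and summarize latency and throughput."""
    for x in inputs[:warmup]:
        predict(x)  # warm-up calls are not measured

    latencies = []
    start = time.perf_counter()
    for x in inputs:
        t0 = time.perf_counter()
        predict(x)
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start

    latencies.sort()
    # Nearest-rank 95th percentile.
    p95_index = max(0, math.ceil(0.95 * len(latencies)) - 1)
    return {
        "requests": len(latencies),
        "mean_ms": statistics.mean(latencies) * 1000,
        "p95_ms": latencies[p95_index] * 1000,
        "throughput_rps": len(latencies) / elapsed if elapsed > 0 else float("inf"),
    }


def fake_model(x):
    """Stand-in workload: sum of squares over the input vector."""
    return sum(v * v for v in x)


report = benchmark(fake_model, [[1.0] * 100 for _ in range(50)])
```

Reporting a tail percentile alongside the mean matters because a small share of slow requests can dominate perceived latency even when the average looks healthy.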

Improving AI model efficiency involves techniques like model pruning and quantization. Pruning trims model size by removing weights that contribute little to the output, and quantization reduces the number of bits needed to represent each number. Together, these techniques can speed up inference, often with only a small loss of accuracy that should be measured for each model. These optimizations are crucial for deploying AI models on resource-limited devices, enabling edge computing scenarios where latency and power consumption are critical.
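The two ideas can be shown on a plain list of weights. This is a minimal sketch, assuming magnitude-based pruning and symmetric 8-bit linear quantization; production toolkits apply the same ideas per layer with many refinements, and the function names here are illustrative only.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the given fraction of weights with the smallest absolute value."""
    n_prune = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_prune])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Map floats to integers in [-127, 127]; returns (integers, scale)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
pruned = prune_by_magnitude(weights, sparsity=0.5)
quantized, scale = quantize_int8(pruned)
restored = dequantize(quantized, scale)
```

Each restored weight differs from its pruned value by at most half the scale factor, which is the rounding error that quantization trades for a smaller representation.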

In a recent project, we improved a client’s AI model’s performance by 30% using these techniques. This resulted in faster processing times and lower computational costs, showing the real-world benefits of intelligent model optimization. These enhancements made it feasible to run sophisticated AI models in environments with limited computational resources.

AI development isn’t without its pitfalls. Understanding common mistakes can save developers time and frustration.

A frequent error is mishandling data, such as using low-quality or unrepresentative datasets. This can lead to inaccurate models and faulty predictions. Always ensure data is clean, relevant, and comprehensive. Techniques such as data augmentation and using synthetic data can help create more robust datasets.
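As a small illustration of data augmentation, the sketch below produces varied copies of a grayscale image stored as a list of pixel rows. The helper names and the specific transformations (a random horizontal flip and a brightness shift of up to 20 levels) are assumptions chosen for the example, not a recommended recipe.

```python
import random


def horizontal_flip(image):
    """Mirror each row of a grayscale image (list of rows of 0-255 ints)."""
    return [list(reversed(row)) for row in image]


def adjust_brightness(image, delta):
    """Shift every pixel by delta, clamped to the valid 0-255 range."""
    return [[min(255, max(0, p + delta)) for p in row] for row in image]


def augment(image, rng):
    """Return a randomly flipped and brightness-shifted copy of the image."""
    out = horizontal_flip(image) if rng.random() < 0.5 else image
    return adjust_brightness(out, rng.randint(-20, 20))


rng = random.Random(42)  # fixed seed so runs are repeatable
original = [[0, 128, 255], [10, 20, 30]]
variants = [augment(original, rng) for _ in range(4)]
```

Each variant keeps the original label, so the training set grows without new labeling effort.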

Another issue is overfitting or underfitting models during training. Overfitting happens when a model learns the training data too well but can’t apply it to new data, while underfitting occurs when a model is too simple to capture underlying patterns. Regularization techniques, like dropout, and cross-validation are effective strategies to mitigate these issues. It’s also wise to iteratively test models and refine datasets to ensure balanced learning.
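Cross-validation can be sketched without any ML library: split the sample indices into k folds, hold each fold out once for validation, and average the scores. The function names below are hypothetical, and `train_and_score` stands for whatever training routine a project uses; it is assumed to accept training and validation indices and return a number.

```python
import random


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_indices, validation_indices) for each of k folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # k roughly equal slices
    for i in range(k):
        validation = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, validation


def cross_validate(train_and_score, n_samples, k=5):
    """Average the score returned by train_and_score over all folds."""
    scores = [train_and_score(train, val) for train, val in k_fold_indices(n_samples, k)]
    return sum(scores) / len(scores)


splits = list(k_fold_indices(10, 5))
```

A model that scores well on its training folds but poorly on the held-out folds is likely overfitting, while poor scores on both suggest underfitting.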

Choosing tools that aren’t well-suited to the project scope can lead to inefficiencies. It’s crucial to align chosen technologies with project goals and existing infrastructure. Misalignment can result in increased complexity and extended development times. Regular tool assessments and proof of concepts (PoCs) can help in making the right decisions.

AI-powered software finds diverse applications across industries, each uniquely benefiting from AI integration.

In retail, AI can optimize inventory management by predicting demand and streamlining stock levels, which helps reduce waste and improve customer satisfaction. For example, AI models analyze purchasing trends to advise on restocking times and quantities. Also, AI can personalize customer experiences by recommending products based on previous purchases and browsing behavior, enhancing customer engagement.
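The restocking advice described above reduces to a forecast plus some arithmetic. In this sketch a simple moving average stands in for a trained demand model, and the function names, the seven-day window, and the safety stock parameter are all assumptions made for illustration.

```python
import math


def forecast_daily_demand(daily_sales, window=7):
    """Moving-average forecast; a stand-in for a trained demand model."""
    recent = daily_sales[-window:]
    return sum(recent) / len(recent)


def reorder_quantity(daily_sales, stock_on_hand, lead_time_days, safety_stock=0, window=7):
    """Units to order so stock covers expected demand until the delivery arrives."""
    expected = forecast_daily_demand(daily_sales, window) * lead_time_days
    needed = expected + safety_stock - stock_on_hand
    return max(0, math.ceil(needed))


sales = [10, 12, 11, 13, 12, 14, 12]
order = reorder_quantity(sales, stock_on_hand=40, lead_time_days=5, safety_stock=10)
```

With an average of 12 units a day, a five-day lead time, and 10 units of safety stock, the 40 units on hand fall 30 short, so the sketch suggests ordering 30.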

In healthcare, AI enhances diagnostics and patient care, providing tools for analyzing medical data with greater accuracy and speed. For instance, AI algorithms can process medical images to spot abnormalities faster than traditional methods. Also, AI-powered predictive analytics can forecast patient outcomes and help create personalized treatment plans, improving overall healthcare delivery.

In the finance sector, AI is key for risk assessment and fraud detection. By analyzing transaction patterns and customer behavior, AI systems can flag unusual activities in real time, reducing the risk of financial fraud. AI also powers algorithmic trading, where it evaluates market conditions to make split-second decisions, optimizing trading strategies for maximum profitability.
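Flagging unusual activity can be illustrated with a basic statistical check: compare a new transaction amount against the customer's history and flag it when it sits too many standard deviations from the mean. This is a deliberately simple stand-in for a trained fraud model; `flag_unusual` and its threshold of 3 are assumptions for the example.

```python
import statistics


def flag_unusual(history, new_amount, threshold=3.0):
    """Return True when new_amount is far outside the customer's usual range."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)  # sample standard deviation; needs 2+ values
    if stdev == 0:
        return new_amount != mean  # any deviation from a constant history stands out
    z_score = abs(new_amount - mean) / stdev
    return z_score > threshold


history = [20, 25, 22, 19, 24, 21, 23]
typical = flag_unusual(history, 23)
suspicious = flag_unusual(history, 500)
```

Real systems combine many more signals, such as merchant, location, and timing, but the principle of scoring each event against learned normal behavior is the same.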

AI-powered software development involves integrating artificial intelligence into software applications to automate processes, analyze data, and improve decision-making. It uses techniques like machine learning and natural language processing to enhance functionality and performance.

AI improves software services by automating repetitive tasks, offering predictive analytics, and enhancing data processing capabilities. This leads to more efficient operations, quicker insights, and improved customer experiences. AI systems can adapt and learn from new data, making them highly valuable in dynamic environments.

Industries like healthcare, finance, retail, and logistics can benefit significantly from AI solutions. These sectors see improved efficiency, cost reductions, and enhanced decision-making capabilities. AI applications extend from operational optimizations to strategic insights, transforming the way businesses operate and compete.

While initial setup costs can be high, AI integration often results in significant cost savings over time through increased efficiency and productivity. Companies should weigh these long-term benefits against the upfront investment. Many AI solutions offer flexible pricing models, allowing businesses to start small and expand as benefits become apparent.

Begin by defining your objectives and evaluating the areas where AI can offer the most value. Consulting with experts like PixlerLab can provide guidance on the best tools and strategies to use, helping you create a roadmap for AI integration tailored to your business needs.

AI-powered software development isn’t just a futuristic concept anymore. It’s a reality that businesses are actively using to stay competitive. As AI continues to evolve, its integration into software development will only deepen, driving further innovation. Our team at PixlerLab is committed to helping businesses navigate this AI evolution by offering tailored solutions that meet their unique needs. We believe the future of AI in software development is bright, and we’re thrilled to be part of that journey.

If you’re considering AI solutions for your business, PixlerLab is here to help. We offer a free consultation to explore how AI-powered software can transform your operations. With our expertise, you’ll be well-equipped to implement advanced AI solutions that drive real results. Contact us today to get started on your AI transformation.
