
AI-powered software development specialization is no longer just a trendy phrase; it’s actively reshaping the tech industry. At PixlerLab, we’ve been right at the heart of this transformation, and we’ve seen firsthand how AI has changed software development by bringing in new skills and strategies. This shift isn’t just about adopting new technology; it’s about rethinking the roles and expertise that exist within development teams. As AI tools become more widely available, demand for AI-focused skills has grown sharply. Developers are picking up new languages and frameworks designed specifically for AI tasks, while companies are reconfiguring team structures to better integrate AI methods.


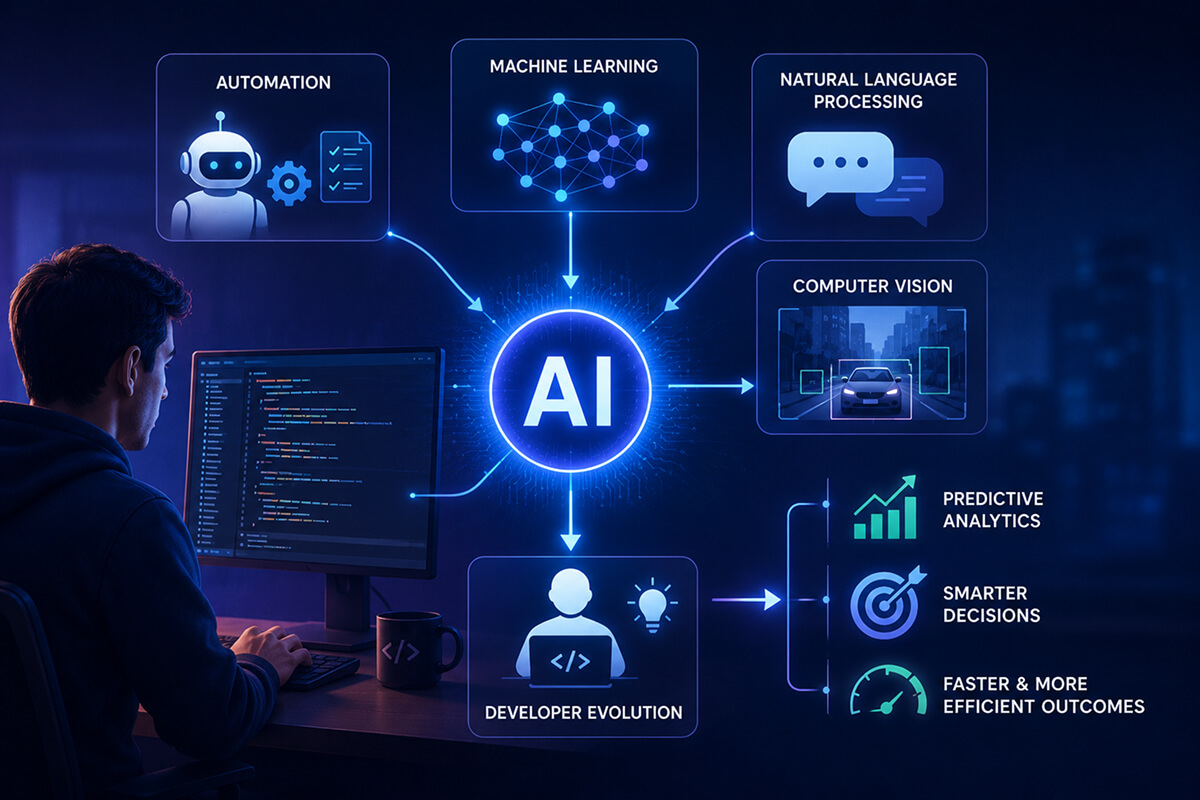

AI’s impact on software development? It’s huge. It boosts productivity by automating tedious tasks and enables complex data analysis with machine learning. This progress is fueled by advances in AI algorithms and by the incorporation of technologies like natural language processing and computer vision into everyday applications. So, why is this such a big deal? Because it shifts the daily demands on developers: it’s rewriting what they need to learn and do. Traditional skills alone no longer suffice; understanding how AI models behave and how they affect the software around them is now key. AI’s role in predictive analytics and decision-making has also changed how businesses operate, leading to quicker, more efficient outcomes.

AI isn’t just a tool; it’s a transformative collaborator that’s driving the software development landscape toward ever-increasing intelligence and automation.

But traditional software development? It’s hit some serious snags. Developers often grapple with inefficiencies that limit scalability, and these are issues AI can tackle head-on through smart automation and streamlined processes. This evolution means developers must quickly get the hang of AI and machine learning. AI skills are no longer a nice-to-have; they’re essential to keeping up with the demand for more intelligent, efficient software solutions. AI-driven automation can handle in seconds some tasks that used to take hours, giving developers time for more strategic work. The pressure is on for developers to get fluent in AI fast if they want to stay in the game.

Traditional development methods (think manual coding and rigid processes) often lead to sluggish iteration cycles and postponed releases. When faced with massive data volumes and the urgent need to adapt quickly, these methods just can’t keep up. AI-powered solutions simplify workflows by automating the tasks that traditionally bog down development. For example, AI can handle test generation, bug tracking, and even code writing, cutting down the time needed for each software cycle. More precisely, it doesn’t just speed up individual tasks; it transforms the entire process. Transitioning to this model means overcoming hurdles like ensuring system compatibility and tackling data quality issues.

Designing a system that integrates AI demands careful thought and execution. Look, it’s not just about throwing a neural network onto existing software. The key is to integrate AI components smoothly into your architecture so that the whole system can scale and stay flexible. This adaptability is crucial for managing the dynamic nature of AI models and their ever-changing datasets. We often find ourselves rethinking old architectural paradigms to fit AI’s specific needs. For instance, AI workloads can have unpredictable data and compute requirements, which calls for a design that can quickly scale horizontally. This might mean using container orchestration tools like Kubernetes to manage AI workloads efficiently.
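
To make horizontal scaling a bit more concrete, here’s a minimal sketch of the replica calculation that the Kubernetes Horizontal Pod Autoscaler documentation describes: desired replicas = ceil(current replicas × current metric ÷ target metric). The function name, the min/max bounds, and the example metric values are our own illustrative assumptions, and the real autoscaler also applies tolerances and stabilization windows that this sketch leaves out.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Hypothetical helper: replica count after one scaling decision."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    # Core ratio from the HPA docs: scale in proportion to metric pressure.
    raw = math.ceil(current_replicas * (current_metric / target_metric))
    # Clamp to the configured bounds (assumed defaults, not real settings).
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # Two inference pods at 90% average utilization with a 60% target.
    print(desired_replicas(2, 90, 60))
```

Working through the numbers by hand like this is a quick sanity check on whether your target metric and replica bounds make sense before you put them into an autoscaler config.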

When you’re designing AI-integrated systems, focus on creating modular and flexible structures. Architectural patterns like microservices work well here: they allow AI components to be updated or swapped out without a full system redo, and they support parallel development, so teams can work on different components at the same time without getting in each other’s way. Another factor is workflow integration. AI needs to be part of the development lifecycle from the start, not an afterthought. Using cloud-native technologies also means AI models can be deployed and scaled without huge infrastructural changes, which makes it easier to handle varying computational demands. Together, these practices keep AI-powered systems efficient and flexible.
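
Here’s a small sketch of what “swappable AI components” can look like inside a single service. The service depends only on an interface, so a new model version can replace the old one without touching the calling code. Every name here (`Classifier`, `ThresholdClassifier`, `MajorityClassifier`, `ScoringService`) is hypothetical, and the two “models” are trivial stand-ins rather than real trained models.

```python
from typing import Protocol, Sequence


class Classifier(Protocol):
    """Interface the service depends on (hypothetical)."""

    def predict(self, features: Sequence[float]) -> str: ...


class ThresholdClassifier:
    """Stand-in for a first model version: thresholds the feature mean."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold

    def predict(self, features: Sequence[float]) -> str:
        mean = sum(features) / len(features)
        return "positive" if mean >= self.threshold else "negative"


class MajorityClassifier:
    """Stand-in for a replacement model: majority vote over feature signs."""

    def predict(self, features: Sequence[float]) -> str:
        votes = sum(1 for value in features if value > 0)
        return "positive" if votes * 2 > len(features) else "negative"


class ScoringService:
    """Service code that never needs to know which model it is running."""

    def __init__(self, model: Classifier) -> None:
        self._model = model

    def swap_model(self, model: Classifier) -> None:
        # Replace the AI component without changing any caller.
        self._model = model

    def score(self, features: Sequence[float]) -> dict:
        return {"label": self._model.predict(features)}


if __name__ == "__main__":
    service = ScoringService(ThresholdClassifier(threshold=0.5))
    print(service.score([-1.0, -2.0, 5.0]))
    service.swap_model(MajorityClassifier())
    print(service.score([-1.0, -2.0, 5.0]))
```

In a real microservices setup the boundary would more likely be a network API than a Python class, but the design choice is the same: keep the contract stable and let the model behind it change.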

Now, setting up AI in software? It’s not as intimidating as it sounds. Here’s a straightforward roadmap to help developers integrate AI models effectively. The thing is, successful implementation calls for both strategic planning and tactical execution.

Each step needs its own attention to detail, because cutting corners can lead to less-than-ideal performance or outright failure. Just last month, we skipped data normalization, and it skewed our outputs. Not even close to ideal. That experience pushed us to set up strict data preprocessing protocols in our workflows.
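
As a rough illustration of the kind of preprocessing guard we mean, the sketch below scales raw values into the 0 to 1 range and refuses to pass along a batch that was never normalized. The function names and the 0 to 255 default range are assumptions chosen for the example (they match 8-bit pixel data), not a description of our actual pipeline.

```python
def min_max_normalize(values, lower=0.0, upper=255.0):
    """Scale raw values from [lower, upper] into [0, 1].

    Raises ValueError on out-of-range input instead of silently
    producing skewed features.
    """
    if upper <= lower:
        raise ValueError("upper must be greater than lower")
    scaled = []
    for value in values:
        if not lower <= value <= upper:
            raise ValueError(f"value {value} outside [{lower}, {upper}]")
        scaled.append((value - lower) / (upper - lower))
    return scaled


def assert_normalized(batch):
    """Guard to run right before training or inference (hypothetical)."""
    if any(value < 0.0 or value > 1.0 for value in batch):
        raise ValueError("batch is not normalized to [0, 1]")
    return batch


if __name__ == "__main__":
    raw_pixels = [0, 51, 255]
    print(assert_normalized(min_max_normalize(raw_pixels)))
```

The point of the second function is that a skipped normalization step fails loudly at the model boundary rather than quietly skewing the outputs.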

Integrating AI models into existing systems often means creating a bridge between traditional software components and new AI algorithms. This involves refactoring code to handle AI inputs and outputs so that all parts of the system communicate smoothly. Often, this requires middleware to manage data translation and process orchestration. AI models typically consume numeric tensors and return probabilistic scores rather than the structured records and deterministic results that traditional software expects, so setting up clear communication protocols is crucial. Successful integration also demands continuous monitoring to make sure AI components maintain their performance standards as they evolve.
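
A thin translation layer is often enough to start with. The sketch below converts an application record into the ordered feature vector a model expects, then converts the model’s raw scores back into something the rest of the system can use. The field names in `FEATURE_ORDER`, the `Prediction` type, and the fake model in the usage example are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Mapping, Sequence

# Hypothetical schema: the order the model was trained on.
FEATURE_ORDER = ("age", "income", "tenure_months")


@dataclass
class Prediction:
    label: str
    confidence: float


def to_feature_vector(record: Mapping[str, float]) -> List[float]:
    """Translate an application record into model input."""
    missing = [name for name in FEATURE_ORDER if name not in record]
    if missing:
        raise KeyError(f"missing fields: {missing}")
    return [float(record[name]) for name in FEATURE_ORDER]


def to_response(scores: Sequence[float], labels: Sequence[str]) -> Prediction:
    """Translate raw model scores into an application-level result."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return Prediction(label=labels[best], confidence=scores[best])


def handle_request(record: Mapping[str, float],
                   model: Callable[[List[float]], Sequence[float]],
                   labels: Sequence[str]) -> Prediction:
    """Middleware entry point: translate in, call the model, translate out."""
    return to_response(model(to_feature_vector(record)), labels)


if __name__ == "__main__":
    fake_model = lambda features: [0.2, 0.8]  # stand-in for a real model
    record = {"age": 41, "income": 52000, "tenure_months": 18}
    print(handle_request(record, fake_model, ["stay", "churn"]))
```

Keeping both translations in one place means a schema change on either side only touches this layer.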

Let’s dig into a practical example of how you can set up an AI model using Python and TensorFlow. This code snippet demonstrates a basic image classification task. We opted for TensorFlow because it handles deep learning tasks well and has strong community backing, which offers plenty of resources for learning and troubleshooting.

```python
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers

# Load example data
(x_train, y_train), (x_test, y_test) = keras.datasets.cifar10.load_data()

# Normalize data
x_train, x_test = x_train / 255.0, x_test / 255.0

# Define model architecture
model = keras.Sequential([
    layers.Conv2D(32, (3, 3), activation='relu', input_shape=(32, 32, 3)),
    layers.MaxPooling2D((2, 2)),
    layers.Flatten(),
    layers.Dense(64, activation='relu'),
    layers.Dense(10, activation='softmax')
])

# Compile model
model.compile(optimizer='adam',
              loss='sparse_categorical_crossentropy',
              metrics=['accuracy'])

# Train model
model.fit(x_train, y_train, epochs=10, batch_size=32, validation_split=0.2)

# Evaluate model
test_loss, test_acc = model.evaluate(x_test, y_test)
print(f"Test accuracy: {test_acc}")
```

This example uses a convolutional neural network to classify images from the CIFAR-10 dataset. Note the use of data normalization and a simple yet effective model architecture. When coding AI solutions, aim for clarity and modularity to ensure maintainability and scalability. It’s also crucial to keep an eye on the model’s performance and iteratively refine the architecture based on feedback and new data insights.

Choosing the right tech stack for AI-powered development is crucial. It determines how efficient and effective your software will be. Frameworks like TensorFlow and PyTorch are favorites for their extensive libraries and strong community backing. But why these tools? Because they combine flexibility with mature functionality that caters to developers of all levels. They provide a dependable foundation for building, training, and deploying sophisticated AI models tailored to specific business needs.

Tried-and-true AI frameworks offer extensive modularity and compatibility with other technologies, making them ideal for building flexible solutions. For example, TensorFlow provides an ecosystem that supports everything from model training to deployment, while PyTorch’s dynamic design makes it appealing for research and experimentation. PyTorch’s intuitive setup simplifies debugging during development, which is invaluable for rapid prototyping. On the flip side, TensorFlow’s comprehensive ecosystem is a go-to for production environments, especially when paired with TensorFlow Extended (TFX) for deploying reliable, flexible models.

Other technologies such as cloud computing platforms (AWS, Google Cloud) and big data tools (Hadoop, Spark) complement these frameworks, enabling efficient management of large datasets and complex computations. The tools you choose shape both current capabilities and future adaptability of AI solutions. For instance, pairing TensorFlow with Kubernetes permits flexible deployment capable of handling fluctuating workloads typical in machine learning operations. This kind of flexibility is crucial as data grows and real-time processing becomes standard.

Performance metrics are the backbone of any successful AI system. Analyzing them shows where latency and efficiency need to improve for the system to perform at its best. It’s about more than achieving high accuracy; it’s about building a responsive, reliable system that can scale with demand.
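
If you want a quick first look at latency before reaching for a full profiling setup, a small timing harness like the one below is a reasonable starting point. It’s a sketch under assumptions: `measure_latency_ms` and `percentile` are names we made up, the percentile uses the simple nearest-rank method, and the `predict` callable in the usage example is a stand-in for whatever inference function you actually run.

```python
import time


def percentile(sorted_samples, pct):
    """Nearest-rank percentile for an ascending list; pct is an int 1..100."""
    rank = max(1, (pct * len(sorted_samples) + 99) // 100)  # integer ceil
    return sorted_samples[rank - 1]


def measure_latency_ms(fn, payload, runs=50, warmup=5):
    """Time repeated calls to fn(payload) and summarize in milliseconds."""
    for _ in range(warmup):
        fn(payload)  # warm caches before measuring
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn(payload)
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    return {
        "p50": percentile(samples, 50),
        "p95": percentile(samples, 95),
        "max": samples[-1],
    }


if __name__ == "__main__":
    predict = lambda batch: sum(batch)  # stand-in for real inference
    print(measure_latency_ms(predict, list(range(10_000))))
```

Looking at p95 alongside the median matters because tail latency is usually what users notice first.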

Key metrics include processing speed, memory usage, and accuracy. A well-tuned AI model should handle data in milliseconds while maintaining high accuracy. But these aren’t the only metrics. Real-world performance data shows that response time can often be improved by up to 40% with optimization techniques like model pruning and quantization. At PixlerLab, we meticulously track these metrics, ensuring our AI solutions not only meet but exceed industry standards. A focus on continuous improvement and adaptation helps maintain high performance even as demands shift.
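
To show what quantization does at its simplest, here’s a toy sketch of symmetric 8-bit quantization applied to a list of weights. This illustrates the idea only; it isn’t how any particular framework implements it, and the function names are our own. In practice you’d rely on the quantization tooling that ships with your framework.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.27]
    quantized, scale = quantize_int8(weights)
    restored = dequantize(quantized, scale)
    worst_error = max(abs(a - b) for a, b in zip(weights, restored))
    print(quantized, scale, worst_error)
```

Each weight now fits in one byte instead of four, at the cost of a rounding error of at most half the scale per weight. Whether that trade is acceptable is something to confirm against your accuracy metrics.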

Monitoring model drift is essential too. That means tracking how the model’s accuracy changes as new data comes in. By implementing continuous evaluation pipelines, developers can make sure models stay effective as underlying data patterns evolve. This proactive approach is vital for maintaining a competitive advantage in fast-paced industries.
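
One simple way to watch for drift is to compare accuracy over a rolling window of recent labeled predictions against the accuracy measured at deployment time. The sketch below does exactly that. The class name, the window size, and the tolerance are illustrative assumptions, and it presumes ground-truth labels eventually arrive for production predictions.

```python
from collections import deque


class DriftMonitor:
    """Flags drift when rolling accuracy falls below baseline - tolerance."""

    def __init__(self, baseline_accuracy, window=100, tolerance=0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self._hits = deque(maxlen=window)  # keeps only the newest outcomes

    def record(self, predicted, actual):
        self._hits.append(predicted == actual)

    @property
    def rolling_accuracy(self):
        if not self._hits:
            return None
        return sum(self._hits) / len(self._hits)

    def drifted(self):
        # Wait for a full window so a few early misses don't raise alarms.
        if len(self._hits) < self._hits.maxlen:
            return False
        return self.rolling_accuracy < self.baseline - self.tolerance


if __name__ == "__main__":
    monitor = DriftMonitor(baseline_accuracy=0.9, window=10)
    for _ in range(10):
        monitor.record("cat", "cat")
    for _ in range(5):
        monitor.record("cat", "dog")
    print(monitor.rolling_accuracy, monitor.drifted())
```

A check like this can feed an alert or trigger a retraining job, depending on how much automation you’re comfortable with.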

AI software development is fraught with potential pitfalls. Many developers, especially those new to AI, repeat common mistakes that could be easily sidestepped with a bit of foresight and planning. Avoiding these can prevent costly revisions and deployment delays.

Common mistakes include failing to properly preprocess data, overfitting models to training data, and skipping performance monitoring in deployed AI systems. These missteps can lead to unreliable outputs and sub-par performance. A notable example from our experience was when a client’s initial disregard for data validation landed us in lengthy debugging sessions. To sidestep these pitfalls, always validate data rigorously, use cross-validation methods, and set up continuous monitoring systems. Learning from past projects and mistakes is priceless in the development journey.
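
For readers who haven’t used cross-validation before, the sketch below shows the basic k-fold idea: split the data into k parts, and let each part take one turn as the validation set while the rest is used for training. `k_fold_indices` is a hypothetical helper written for illustration. It doesn’t shuffle, so shuffle your data first if its order is meaningful, and in real projects a library implementation such as scikit-learn’s `KFold` is usually the better choice.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for each of k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    # Spread any remainder across the first folds so sizes differ by at most 1.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0)
                  for i in range(k)]
    start = 0
    for size in fold_sizes:
        validation = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, validation
        start += size


if __name__ == "__main__":
    for train, validation in k_fold_indices(10, 3):
        print(len(train), validation)
```

Averaging the validation score across folds gives a steadier estimate than a single train/test split, which helps catch overfitting before deployment.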

Another typical issue is the lack of collaboration between AI engineers and domain experts. Clear communication and shared understanding between these groups is crucial for aligning AI models with real-world needs and expectations. Fostering such collaboration leads to AI models that aren’t just accurate but also contextually relevant and practical.

The versatility of AI-powered software is evident in its wide-ranging applications across industries. From healthcare to finance, AI enhances capabilities and introduces efficiencies previously unimaginable. Its role in transforming industries is undeniable, offering tools to tackle complex problems that were once seen as insurmountable.

In healthcare, AI aids in diagnostics and predictive analytics, helping to improve patient outcomes. Algorithms can assess medical images with precision on par with, or sometimes exceeding, that of human experts. In finance, AI is employed for fraud detection and automated trading, enhancing security and efficiency. AI systems can sift through transaction data in real time to spot anomalies indicative of fraud. In retail, AI is used for personalized marketing and demand forecasting. Each use case showcases AI’s knack for turning industry-specific challenges into opportunities.
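
To give a feel for the fraud example, here’s a deliberately simple anomaly check on transaction amounts. It uses the modified z-score, which is based on the median and the median absolute deviation, with the commonly cited cutoff of 3.5. Production fraud systems use far richer features and models; the function name and the sample amounts are made up for illustration.

```python
import statistics


def flag_anomalies(amounts, threshold=3.5):
    """Return indices of amounts whose modified z-score exceeds threshold."""
    median = statistics.median(amounts)
    deviations = [abs(amount - median) for amount in amounts]
    mad = statistics.median(deviations)
    if mad == 0:
        return []  # no spread to measure against; nothing can be scored
    return [index for index, deviation in enumerate(deviations)
            if 0.6745 * deviation / mad > threshold]


if __name__ == "__main__":
    amounts = [20.0, 22.5, 19.0, 21.0, 23.0, 18.5, 950.0]  # made-up data
    print(flag_anomalies(amounts))
```

We used the median-based score here rather than a plain mean and standard deviation because a single huge transaction inflates the standard deviation and can end up hiding itself.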

At PixlerLab, we’ve embarked on projects across these sectors, customizing AI solutions to each industry’s unique demands. This adaptability of AI underscores its transformative potential. Take our work in logistics, for instance, where AI has optimized supply chains, trimming delivery times and boosting customer satisfaction. Similarly, in energy, AI models forecast equipment failure, enabling timely maintenance and minimizing downtime. These instances highlight the breadth of AI’s applicability and its role in driving operational excellence.

Diving into AI development requires a mix of skills: programming (Python, R), a grasp of machine learning frameworks (TensorFlow, PyTorch), data analytics, and domain-specific expertise. An understanding of AI ethics is also becoming increasingly important. Developers must be able to tackle both the technical and ethical sides of AI development so that solutions are sustainable and responsible.

AI transforms software development by automating routine tasks and bolstering decision-making processes. It shifts the focus from manual coding to strategic problem-solving, letting developers create more intelligent, adaptive systems. The integration of AI fosters innovation, pushing the boundaries of what current tech can achieve and enabling breakthroughs in various fields.

Ethical considerations in AI encompass ensuring transparency, fairness, and accountability in AI systems. Developers must also prioritize data privacy and counter algorithmic bias, striving for responsible AI use. Addressing these concerns is vital for maintaining public trust and guaranteeing AI technologies contribute positively to society.

AI is reshaping software development in profound ways, creating new opportunities and presenting new challenges for developers. The main takeaway? Keeping up with and embracing technological advances is crucial. AI’s influence will only grow, and staying ahead demands welcoming change and innovation. Developers need to stay open to integrating AI into all aspects of software development so they remain at the forefront of tech advancements.

We see a future where AI is woven into every aspect of software development. Developers who specialize in AI will lead this evolution, molding the next wave of intelligent, adaptive software solutions. At PixlerLab, we’re thrilled to be part of this journey and excited about what’s coming. As AI keeps evolving, it promises to revolutionize industries and redefine tech possibilities, offering endless opportunities for those ready to explore its potential.

Looking to integrate AI into your software solutions? At PixlerLab, we offer expert AI development services tailored to your business needs. Whether it’s optimizing existing systems or building new AI-powered applications, our team is ready to help. Contact us today to explore how our solutions can benefit your business.
